Again with the reductions! I keep writing code which reduces over a collection, keeping track of more than one variable. For instance, here’s one way to find the mean of a collection of integers:

(defn mean

"A reducer to find the mean of a collection. Accumulators are [sum count] pairs."

([] [0 0])

([[sum count]] (/ sum count))

([[sum count] x]

[(+ sum x) (inc count)]))

This mean function is what Clojure calls a reducer, or a reducing function. With no arguments, it constructs a fresh accumulator. With two arguments, it combines an element of some collection with the accumulator, returning a new accumulator. With one argument, it transforms the accumulator into some final result.

We can use this reducer with standard Clojure reduce, like so:

(require '[criterium.core :refer [quick-bench bench]] '[clojure.core.reducers :as r])

(def numbers (vec (range 1e7)))

(mean (reduce mean (mean) numbers))

; => 9999999/2

Or use clojure.core.reducers/reduce to apply that function to every element in a collection. We don’t need to call (mean) to get an identity here; r/reduce does that for us:

(mean (r/reduce mean numbers))

; => 9999999/2

Or we can use transduce, which goes one step further and calls the single-arity form to transform the accumulator. Transduce also takes a transducer: a function which takes a reducing function and transforms it into some other reducing function. In this case we’ll use identity–mean is exactly what we want.

(transduce identity mean numbers)

; => 9999999/2

The problem with all of these approaches is that mean is slow:

user=> (bench (transduce identity mean numbers))

Evaluation count : 60 in 60 samples of 1 calls.

Execution time mean : 1.169762 sec

Execution time std-deviation : 46.845286 ms

Execution time lower quantile : 1.139851 sec ( 2.5%)

Execution time upper quantile : 1.311480 sec (97.5%)

Overhead used : 20.346327 ns

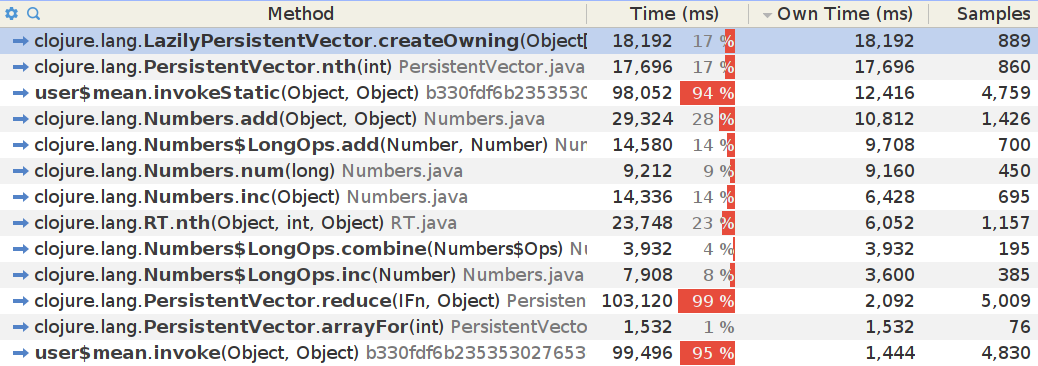

So that’s 1.169 seconds to find the mean of 10^7 elements; about 8.5 MHz. What’s going on here? Let’s try Yourkit:

Yikes. We’re burning 42% of our total time in destructuring (nth) and creating (LazilyPersistentVector.createOwning) those vector pairs. Also Clojure vectors aren’t primitive, so these are all instances of Long.

Faster Reducers

Check this out:

(require '[dom-top.core :refer [reducer]])

(def mean

"A reducer to find the mean of a collection."

(reducer [sum 0, count 0] ; Starting with a sum of 0 and count of 0,

[x] ; Take each element, x, and:

(recur (+ sum x) ; Compute a new sum

(inc count)) ; And count

(/ sum count))) ; Finally dividing sum by count

The reducer macro takes a binding vector, like loop, with variables and their initial values. Then it takes a binding vector for an element of the collection. With each element it invokes the third form, which, like loop, recurs with new values for each accumulator variable. The optional fourth form is called at the end of the reduction, and transforms the accumulators into a final return value. You might recognize this syntax from loopr.

Under the hood, reducer dynamically compiles an accumulator class with two fields: one for sum and one for count. It’ll re-use an existing accumulator class if it has one of the same shape handy. It then constructs a reducing function which takes an instance of that accumulator class, and rewrites any use of sum and acc in the iteration form into direct field access. Instead of constructing a fresh instance of the accumulator every time through the loop, it mutates the accumulator fields in-place, reducing GC churn.

user=> (bench (transduce identity mean numbers))

Evaluation count : 120 in 60 samples of 2 calls.

Execution time mean : 753.896407 ms

Execution time std-deviation : 10.828236 ms

Execution time lower quantile : 740.657627 ms ( 2.5%)

Execution time upper quantile : 778.701155 ms (97.5%)

Overhead used : 20.346327 ns

Getting rid of the vector destructuring makes the reduction ~35% faster. I’ve seen real-world speedups in my reductions of 50% or more.

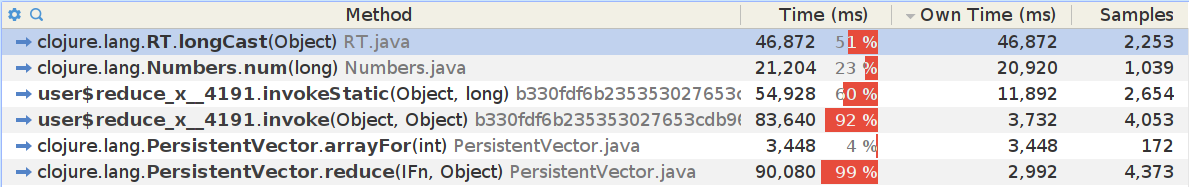

Primitives

Since we control class generation, we can take advantage of type hints to compile accumulators with primitive fields:

(def mean

(reducer [^long sum 0,

^long count 0]

[^long x]

(recur (+ sum (long x)) (inc count))

(/ sum count)))

Since reduce, transduce, etc. call our reducer with Object, rather than Long, we still have to unbox each element–but we do avoid boxing on the iteration variables, at least. A primitive-aware reduce could do better.

This gives us a 43% speedup over the standard pair reducer approach.

user=> (bench (transduce identity mean numbers))

Evaluation count : 120 in 60 samples of 2 calls.

Execution time mean : 660.420219 ms

Execution time std-deviation : 14.255986 ms

Execution time lower quantile : 643.583522 ms ( 2.5%)

Execution time upper quantile : 692.084752 ms (97.5%)

Overhead used : 20.346327 ns

Early Return

The reducer macro supports early return. If the iteration form does not recur, it skips the remaining elements and returns immediately. Here, we find the mean of at most 1000 elements:

(def mean

(reducer [^long sum 0,

^long count 0

:as acc] ; In the final form, call the accumulator `acc`

[^long x]

(if (= count 1000)

(/ sum count) ; Early return

(recur (+ sum x) (inc count))) ; Keep going

(if (number? acc) ; Depending on whether we returned early...

acc

(let [[sum count] acc]

(/ sum count)))))

For consistency with transduce, both normal and early return accumulators are passed to the final form. Because the early-returned value might be of a different type, reducer lets you declare a single variable for the accumulators in the final form–here, we call it acc. Depending on whether we return early or normally, acc will either be a number or a vector of all accumulators: [sum count].

Available Now

I’ve been using reducer extensively in Jepsen over the last six months, and I’ve found it very helpful! It’s used in many of our checkers, and helps find anomalies in histories of database operations more quickly.

If you’d like to try this for yourself, you’ll find reducer in dom-top, my library for unorthodox control flow. It’s available on Clojars.

A special thanks to Justin Conklin, who was instrumental in getting the bytecode generation and dynamic classloading reducer needs to work!

Post a Comment